Large Language Models (LLMs) are now widely used for text generation, analytics, programming, customer support, document processing, and many other tasks. However, many users face the same issue: the more complex the request is, the higher the chance of receiving a vague, incomplete, or even incorrect response.

The reason is simple: LLMs tend to perform better when they work through a sequence of clearly defined steps than when they try to solve a “big problem all at once.” That is why task decomposition is one of the key principles for using modern language models effectively.

The Problem With One Large Prompt

A common mistake is sending everything in one prompt:

- the task description

- style requirements

- limitations

- input data

- expected output format

- additional conditions

As a result, the model must keep many requirements in context, create a plan, and generate the final output simultaneously. Even strong models may “cut corners”: skip details, misinterpret requirements, or hallucinate information.

This becomes even more noticeable when using mid-level models such as GigaChat or similar solutions.

What Task Decomposition Provides

Decomposition is an approach where a task is split into a chain of simple stages. Each stage is a separate prompt with a specific goal. The output of one stage becomes the input for the next one.

It often looks like this:

- The model analyzes the task and generates a plan.

- Then it extracts key entities or builds a structure.

- Next it generates the text based on that structure.

- After that it checks itself and fixes mistakes.

- Finally, it formats the output according to the requirements.

In practice, this approach tends to be more reliable than one “universal” prompt, because each prompt has only one job.
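The stages listed above can be sketched as a simple chain. This is a minimal illustration, not a reference implementation: the `LLM` type, the `run_chain` helper, the prompt wording, and the `echo_llm` stand-in are all assumptions made for the example. In real use, `echo_llm` would be replaced by a call to whichever model API you work with.

```python
from typing import Callable, List

# Hypothetical interface: any function that takes a prompt and returns model text.
LLM = Callable[[str], str]

# One template per stage; "{input}" is replaced with the previous stage's output.
# The wording of these prompts is illustrative only.
STAGE_TEMPLATES: List[str] = [
    "Analyze the task and write a short plan.\n\nTask:\n{input}",
    "Extract the key entities and build a structure from this plan.\n\n{input}",
    "Write the text based on this structure.\n\n{input}",
    "Check the text for mistakes and return a corrected version.\n\n{input}",
    "Format the text according to the requirements.\n\n{input}",
]


def run_chain(llm: LLM, templates: List[str], task: str) -> List[str]:
    """Run the stages in order and return every intermediate output."""
    outputs: List[str] = []
    current = task
    for template in templates:
        # The output of one stage becomes the input for the next one.
        current = llm(template.format(input=current))
        outputs.append(current)
    return outputs


def echo_llm(prompt: str) -> str:
    """Stand-in for a real model call: wraps the prompt so the data flow is visible."""
    return f"<{prompt}>"


if __name__ == "__main__":
    results = run_chain(echo_llm, STAGE_TEMPLATES, "Write a product FAQ")
    for step, output in enumerate(results, start=1):
        print(f"stage {step}: {len(output)} characters")
```

Returning every intermediate output, rather than only the last one, is a deliberate choice here: it makes each stage easy to inspect later.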

Why Prompt Chaining Improves Accuracy

1. The Model Makes Fewer Logical Mistakes

LLMs often produce reasoning errors when they are forced to solve a complex task in one go. But if each step is limited to a narrow function (for example: “create a plan,” “extract key points,” “rewrite in a formal style”), there is less room for error at each step, and the overall probability of mistakes tends to drop.

The model stops “guessing” the final answer and starts performing clear actions.

2. Each Stage Becomes Controllable

With a single large prompt, you cannot easily understand where the model failed: in interpreting the task, in reasoning, or in formatting.

Decomposition lets you control each stage separately:

- you can validate intermediate results,

- adjust input data if needed,

- rerun only a specific step instead of repeating the whole process.

This is especially important in business processes where mistakes can be costly.
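As a rough sketch of what validating an intermediate result and rerunning only one step might look like, consider the helper below. The names `run_step` and `make_flaky_llm`, the retry limit, and the "non-empty output" check are assumptions chosen for illustration; a real validator would depend on what the step is supposed to produce.

```python
from typing import Callable, Dict, Tuple

# Hypothetical interface: any function that takes a prompt and returns model text.
LLM = Callable[[str], str]


def run_step(
    llm: LLM,
    prompt: str,
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
) -> str:
    """Call the model for one step until its output passes validation."""
    for _ in range(max_attempts):
        output = llm(prompt)
        if is_valid(output):
            return output
    raise ValueError(f"step failed validation after {max_attempts} attempts")


def make_flaky_llm() -> Tuple[LLM, Dict[str, int]]:
    """Stand-in model: returns an empty answer on the first call, a usable one after."""
    calls = {"n": 0}

    def llm(prompt: str) -> str:
        calls["n"] += 1
        return "" if calls["n"] == 1 else "1. Intro\n2. Offer"

    return llm, calls


if __name__ == "__main__":
    llm, calls = make_flaky_llm()
    outline = run_step(llm, "Create an outline", lambda text: text.strip() != "")
    print(outline)
    print("model calls:", calls["n"])
```

Only the failing step is repeated; earlier stages and their outputs are left untouched.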

3. Reduced Context Overload

When a task is split into stages, each prompt includes only the necessary information. The model is not overloaded with unnecessary details.

This is critical when:

- context length is limited,

- you use a budget model,

- the input data is large (documents, chat logs, reports).

4. You Can Use Simpler and Cheaper Models

One of the biggest advantages of decomposition is that it can bring the output of mid-level models closer to “high-end model quality.”

For example, GigaChat can produce strong results if you:

- ask it to build a structure first,

- then generate arguments,

- then assemble the text,

- and finally run a self-check step.

So quality depends not only on the model itself, but also on how well the workflow is organized.

5. The Model Can Be Forced to Review Itself

One of the most powerful techniques is adding a “review and improvement” stage.

For example:

- “Check the text for logical contradictions”

- “Find weak arguments”

- “Remove repetition and filler words”

- “Unify the writing style”

LLMs are especially strong as editors when they improve an already generated draft.
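A review stage like this can be sketched as a series of editing passes over one draft. The `review` function, the exact prompt layout with a `Text:` marker, and the `fake_editor` stand-in are assumptions for the example; the four instructions are the ones listed above.

```python
from typing import Callable, List

# Hypothetical interface: any function that takes a prompt and returns model text.
LLM = Callable[[str], str]

REVIEW_INSTRUCTIONS: List[str] = [
    "Check the text for logical contradictions",
    "Find weak arguments",
    "Remove repetition and filler words",
    "Unify the writing style",
]


def review(llm: LLM, draft: str, instructions: List[str]) -> str:
    """Apply each review instruction in turn; every pass edits the previous result."""
    text = draft
    for instruction in instructions:
        prompt = f"{instruction}. Return only the revised text.\n\nText:\n{text}"
        text = llm(prompt)
    return text


def fake_editor(prompt: str) -> str:
    """Stand-in for a real model: returns the text after the marker unchanged."""
    return prompt.split("Text:\n", 1)[1]


if __name__ == "__main__":
    print(review(fake_editor, "Our product is good.", REVIEW_INSTRUCTIONS))
```

Giving each pass a single instruction keeps the reviewer focused on one kind of problem at a time.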

Decomposition as an Approximation of Real Thinking

Humans rarely solve complex problems instantly. Usually we:

- think about what needs to be done,

- create a draft,

- improve it,

- correct mistakes,

- and validate the final result.

Task decomposition forces the LLM to work in a similar way. This is exactly where the quality boost comes from.

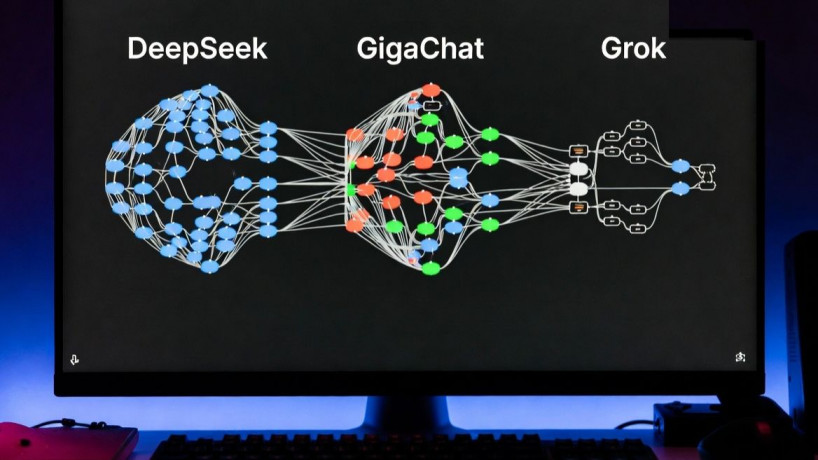

LLM Orchestration: Different Models for Different Stages

The next level is using different models at different stages.

For example:

- one model is good at analysis and structuring,

- another is better at writing,

- a third one is strong at verification and validation,

- a fourth is cheaper and suitable for routine tasks.

This approach is called LLM orchestration, where multiple models work as a “team” instead of one universal performer.

As a result, you can achieve the best balance:

- higher quality,

- lower cost,

- faster processing,

- predictable outputs.
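One possible way to express orchestration in code is to attach a model name to every stage and look the model up when the stage runs. The sketch below assumes a hypothetical `orchestrate` helper and made-up model names (`analyst`, `writer`, `checker`); the `tagged` stand-ins only label their output so you can see which "model" handled which stage.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: any function that takes a prompt and returns model text.
LLM = Callable[[str], str]


def orchestrate(models: Dict[str, LLM], stages: List[Tuple[str, str]], task: str) -> str:
    """Run each (model_name, template) stage with the model assigned to it."""
    current = task
    for model_name, template in stages:
        llm = models[model_name]  # raises KeyError if a stage names an unknown model
        current = llm(template.format(input=current))
    return current


def tagged(name: str) -> LLM:
    """Stand-in model that prefixes its name to the prompt it received."""
    return lambda prompt: f"[{name}] {prompt}"


if __name__ == "__main__":
    models = {
        "analyst": tagged("analyst"),
        "writer": tagged("writer"),
        "checker": tagged("checker"),
    }
    stages = [
        ("analyst", "Analyze: {input}"),
        ("writer", "Write: {input}"),
        ("checker", "Verify: {input}"),
    ]
    print(orchestrate(models, stages, "brief"))
```

Because the mapping from stage to model is plain data, swapping a cheaper model into a routine stage means changing one entry rather than rewriting the pipeline.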

Why This Matters for Business Use Cases

In business, LLMs are often used in processes where the key requirements are:

- stability of output,

- repeatability,

- transparency,

- error minimization.

A single large prompt rarely provides consistent results: the model may change the structure or tone, or forget constraints.

Decomposition turns generation into a controlled pipeline:

input data → processing step → generation step → validation step → final output.

The result is no longer just “chatting with AI,” but a real automation tool.

How Botman.one Solves Task Decomposition

The low-code platform Botman.one allows you to implement this approach without complex development.

Instead of manually copying outputs between prompts, you can build a step-by-step workflow:

- each step is a separate block,

- results automatically flow to the next stage,

- conditions can be applied,

- intermediate data can be saved,

- external sources can be connected.

Most importantly, Botman.one allows you to use different LLMs at each stage.

For example:

- the analysis step can be done with GigaChat,

- text generation can be handled by another model,

- final editing can be performed by a more accurate LLM,

- classification tasks can be delegated to the cheapest model.

This makes it possible to build a true LLM orchestrator, where models are used efficiently and strategically.

A Practical Example of a Prompt Chain

Imagine you need to create a commercial proposal.

Single prompt approach:

“Create a commercial proposal based on this data…”

This often leads to weak output: too much filler text, poor structure, and unclear messaging.

Decomposition approach:

- Prompt 1: “Extract the key product benefits and the client’s pain points.”

- Prompt 2: “Create a commercial proposal outline based on these benefits.”

- Prompt 3: “Write the proposal based on the outline. Style: business formal. Length: one page.”

- Prompt 4: “Reduce the text by 30% and remove repetitions.”

- Prompt 5: “Check persuasiveness and add a strong call to action.”

The final result is usually noticeably stronger, even if you use a mid-level model.
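The five prompts of the commercial proposal example could be wired together roughly as follows. The prompt texts come from the example; everything else is an assumption made for illustration: the stage names, the `run_named_chain` helper, the way input data is appended to each prompt, and the `make_counting_llm` stand-in that returns numbered placeholder outputs instead of calling a real model.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: any function that takes a prompt and returns model text.
LLM = Callable[[str], str]

# (stage name, prompt template); "{input}" receives the previous stage's output.
PROPOSAL_STAGES: List[Tuple[str, str]] = [
    ("benefits", "Extract the key product benefits and the client's pain points.\n\n{input}"),
    ("outline", "Create a commercial proposal outline based on these benefits.\n\n{input}"),
    ("draft", "Write the proposal based on the outline. Style: business formal. "
              "Length: one page.\n\n{input}"),
    ("shortened", "Reduce the text by 30% and remove repetitions.\n\n{input}"),
    ("final", "Check persuasiveness and add a strong call to action.\n\n{input}"),
]


def run_named_chain(llm: LLM, stages: List[Tuple[str, str]], data: str) -> Dict[str, str]:
    """Run the stages in order and keep each intermediate result under its name."""
    results: Dict[str, str] = {}
    current = data
    for name, template in stages:
        current = llm(template.format(input=current))
        results[name] = current
    return results


def make_counting_llm() -> Tuple[LLM, List[str]]:
    """Stand-in model: records every prompt and returns a numbered placeholder."""
    prompts: List[str] = []

    def llm(prompt: str) -> str:
        prompts.append(prompt)
        return f"output {len(prompts)}"

    return llm, prompts


if __name__ == "__main__":
    llm, prompts = make_counting_llm()
    results = run_named_chain(llm, PROPOSAL_STAGES, "Product: CRM; client: retail chain")
    for name, text in results.items():
        print(f"{name}: {text}")
```

Keeping the results in a dictionary means that any intermediate artifact, such as the outline, can be reviewed or reused without rerunning the earlier prompts.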

Conclusion: Decomposition Turns LLMs Into Reliable Tools

Breaking tasks into stages provides:

- higher accuracy,

- fewer mistakes,

- process control,

- built-in validation,

- lower costs,

- flexibility in choosing models.

And platforms like Botman.one help turn this approach into a practical low-code system: building prompt chains, integrating multiple LLMs, and creating a full-scale AI orchestration workflow.

As a result, users get not just an “AI response,” but a controlled intelligent process that can be scaled and implemented in real business scenarios.